AI 5.0: Asking Better Questions, Getting Better Answers

I think back to when I first began using AI. My prompts were generic, and the results reflected that. I often found myself revising, correcting, and trying again—sometimes 10 or 12 times (maybe 20…grin)—just to get close to what I needed.

It turns out precision matters.

We must remember that AI is sycophantic: it wants to make you happy, so it will follow whatever path it thinks you want it to take. What it actually needs from you is direction and precision.

In our last blog post, we discussed how to build your personal profile. That profile is the first step in training your AI system to think like you: to think like a rehabilitation counselor. Think of it as the first brick in the foundation. Now it’s time to lay the rest of the bricks.

Our next step is to build our prompts—and that begins with understanding what makes a prompt effective.

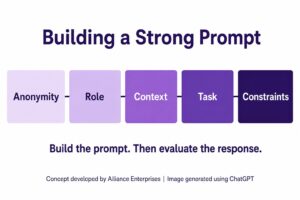

In my mind, there are five parts to a good prompt: begin with anonymity, define the role, explain context, describe the task, and provide constraints.

As rehabilitation counselors, many of us are interested in AI in large part because it can help with the mountain of paperwork. We are looking for efficiencies in writing intakes, making eligibility decisions, analyzing medical records, writing plans, and drafting case notes. Regardless of the case management system you use, AI can assist if it is used properly and ethically.

Step 1 – Protect Confidentiality Through Anonymity

First things first. Unless you are working within a secure, closed system (one owned or managed by your agency), there is a risk that anything you enter could be retained or used to train large language models. Entering information that has not been anonymized could therefore violate confidentiality.

Thus, the first step is to anonymize your content: remove or alter identifying information, such as names, addresses, dates of birth, and case numbers, before entering it into an AI system.

Step 2 – Define the Professional Role

It is important to define who you are and the role you want the AI system to take on. It does not inherently know how to write like a vocational rehabilitation counselor; it must be guided to do so.

For example, “I am a vocational rehabilitation counselor working in a state vocational rehabilitation agency.” Over time, the system will begin to reflect your role and expectations.

Step 3 – Provide Clear Context

Context helps AI understand the counseling situation. It guides the system toward more relevant and meaningful responses.

For example, “I have completed my initial interview with a 25-year-old individual with a C-6 spinal cord injury.” By describing the specific condition, you help the system better align its response to the situation.

Step 4 – Describe the Task Precisely

What are you specifically asking the AI system to do? The more precise you are, the more useful the response will be.

You need to clearly describe what you want the system to produce. For example, “Please identify possible workplace accommodations and assistive technology devices for an individual with a C-6 spinal cord injury seeking employment as a computer software developer.”

This becomes the starting point. Once recommendations are provided, you can continue refining and exploring each option more deeply.

Step 5 – Establish Ethical and Policy Constraints

This is where you guide the process to ensure that what is returned aligns with ethical standards, policy requirements, and legal limitations.

For example, “Recommendations must align with CRCC’s ethical code and ACA guidance on the use of AI, be allowable under the Rehabilitation Act as amended by WIOA, and comply with (name your state) policy.”

These documents may already be reflected in an AI system’s general knowledge base, but you can strengthen accuracy by explicitly adding key guidance to your working environment. To help ensure this guidance is considered, I have personally incorporated two documents into my system: CRCC’s Frequently Asked Questions and Guiding Statements to Support Rehabilitation Counselors, and ACA’s Recommendations for Practicing Counselors and Their Use of AI.

Evaluate Before You Use

There are two critical issues to consider once you receive a response.

First, remember that AI is designed to be helpful. If it cannot find what you are looking for, it may generate information that appears correct but is not. Your first responsibility is to evaluate the veracity of the response. Is it accurate? Is it valid? Is it true?

Second, AI tends to provide responses that reflect what is typical or average. This can unintentionally limit options for individuals with disabilities. It may not naturally extend beyond conventional expectations unless prompted to do so.

Evaluate whether the response reflects the full range of possibilities. If needed, ask the system to expand its thinking. If we do not push beyond the average, the inherent bias of the system may narrow what is considered possible for the people we serve.

Never settle for the first response. Pursue the best response.

Practical Examples

Each of these examples assumes you are using AI for a particular task for the first time. As your system learns your role, expectations, and patterns, you will need to provide less information.

A case documentation prompt:

“I am a vocational rehabilitation counselor working in a state VR agency. Please review the attached case note for clarity and professional tone consistent with vocational rehabilitation documentation. Offer specific suggestions to improve precision while ensuring all required information is included.”

A plan development prompt:

“I am writing an Individualized Plan for Employment for a consumer with limited work history who is interested in information technology. Please suggest employment exploration activities that will help this individual better understand the required training and typical job tasks.”

A Final Thought

If you are not sure how to structure a prompt, you can ask your AI system to help you write one. Describe your goal and provide some basic information, and the system can help you build a more effective prompt.

Conclusion

If we build the right profile and develop thoughtful prompts, AI can become a powerful tool—one that increases efficiency while expanding opportunity for the people we serve.

But we must remember: AI is not a rehabilitation counselor. It cannot replace professional judgment, lived experience, or ethical responsibility.

Precision in what we ask, and discipline in how we evaluate the response, will determine whether AI becomes a shortcut—or a meaningful extension of our practice.

*This blog was edited with the assistance of ChatGPT.